A finalized prototype for the Vyo. With different symbols, the robot will execute specific commands. Image courtesy of Guy Hoffman.

Latest News

July 1, 2016

For Cornell University robotics professor Guy Hoffman, new devices demand new engineering approaches. Case in point, a new robotic smart home interface, called Vyo, that he and his colleagues created in collaboration with Korean telecommunications company SK Telecom. Rather than merely a touch screen or smartphone app, the interface takes an unusual form: A social robot. Their design process took an equally unusual turn.

A New Vision for Robots

Hoffman’s vision of robots in the home does not include “little white astronauts,” as he calls the conventional notion of how domestic robots should look. Instead, he says, such robots should “do justice to the term ‘home,’” and blend in with their surroundings, looking more like appliances or pieces of furniture, and behaving in non-human but also decidedly non-robotic ways. “Robots are not human and they’re not to be confused with humans,” Hoffman says. “A natural interface does not mean a human-like interface.”

He and his collaborator, Oren Zuckerman, got a chance to put this vision to the test when managers from SK Telecom approached them in early 2015 to create a new device for the home that would fit in with the company’s strategy for innovation. After considering a number of possibilities presented by Hoffman—who was then co-director of the Media Innovation Lab at the Interdisciplinary Center Herzliya in Israel—the company selected the Vyo concept for development.

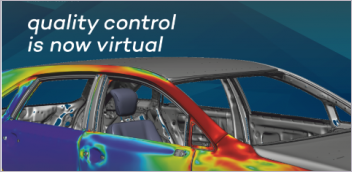

The completed robot prototype stands about a foot tall and weighs about 4 lbs. Its glossy white finish evokes the home appliances it was designed to control. It consists of a flat base on which a user places physical icons, or phicons, representing different appliances that the robot can control. An articulated head peers down intently at the icons with a single eye-like circle, and bobs to acknowledge spoken commands and queries. To adjust the setting of an appliance, a heater for example, the user slides the icon on the tray and sees the adjustments being made on a screen embedded in the top of the robot’s “head.”
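The article does not say how Vyo translates a phicon's position into an appliance setting. One plausible approach is a simple linear mapping from the icon's position along the tray to the setting's range, sketched here in Python. The function name, the millimeter units and the temperature range are all hypothetical and chosen only for illustration.

```python
def slide_to_setting(position_mm, track_length_mm, min_value, max_value):
    """Linearly map a phicon's position along the tray to an appliance setting.

    All parameters are hypothetical: position_mm is how far the icon has
    been slid, track_length_mm is the usable length of the tray, and
    min_value/max_value bound the setting (e.g. a heater temperature).
    """
    if track_length_mm <= 0:
        raise ValueError("track_length_mm must be positive")
    # Clamp so an icon detected slightly off the tray still gives a valid setting.
    fraction = min(max(position_mm / track_length_mm, 0.0), 1.0)
    return min_value + fraction * (max_value - min_value)


if __name__ == "__main__":
    # An icon halfway along a 100 mm track, for a heater adjustable from 16 to 30 degrees.
    print(slide_to_setting(50, 100, 16, 30))
```

A real interface would likely also smooth the camera's position estimates before applying a mapping like this, so that the setting does not jitter while the icon is at rest.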

For design inspiration, Hoffman drew on the appearance of a microscope he saw in a school supply store window, which he iterated on in a series of sketches made on an iPad. The iPad sketches were a departure for Hoffman, who had previously used paper for concept sketches. “I very quickly found out that it makes me very productive,” he says of iPad sketching. “I ended up doing a lot of sketches in a short amount of time. Also, they’re much easier to share with my collaborators.”

Next came a series of exploratory animations made with Blender, an open-source 3D animation program. These animation sketches helped the team make choices about where to place the motors that would animate the robot. “The placement of the motors affects the personality of the robot even when it is doing the same movements,” says Hoffman.

Hoffman and his team—which included research assistants Michal Luria and Benny Megidish along with co-principal investigator Oren Zuckerman, and SK Telecom project manager Sung Park—also looked beyond the lab to achieve Vyo’s lifelike movements. It was here that the team’s process took a decidedly unorthodox turn.

Multidisciplinary Design

To further refine the kind of interaction that Hoffman was looking for, in which the robot bows deferentially and invites interaction through non-verbal communication, the team recruited specialists in disciplines not normally associated with robotics. Actors, for example.

Professional actors role-played a butler and a householder to demonstrate how the butler should behave to be as helpful yet unobtrusive as possible. As a result, a posture of quiet attentiveness became the robot’s default behavior. The actors also informed the robot’s notification behaviors, for example looking around for a user’s help when an appliance needs attention.

The team further refined the robot’s movements with the help of laser-cut wooden components and parts such as joints, designed in Autodesk Fusion 360 and SOLIDWORKS, that were printed on a Stratasys uPrint SE 3D printer. “Printing variations of the robot’s joint attachments enabled the team to quickly explore the robot’s movements with a variety of linkage structures,” says Hoffman.

A professional puppeteer manipulated a puppet made from the wood blocks and 3D-printed joints to enable the team to further experiment with movement. Puppets were also presented to test subjects to collect data on their reactions to specific behaviors and sizes for the robot.

Hoffman says that this kind of far-ranging multidisciplinary approach is crucial to his work, which blends the arts and engineering in an effort to create robots that pay more respect to our essential humanity than conventional interfaces and devices do. “In some ways, you can do everything on a smartphone today,” says Hoffman. “But that reduces the human-machine interaction to tapping your finger on a glass screen and watching colorful animations. In my mind this doesn’t comprise the full human experience.”

Robotic Ambassador to the Future

The team incorporated their findings from the acting improvisation, puppetry and user testing into the software, structure and electronics of the finished prototype. They built the prototype gradually, replacing the wooden parts as the structural elements were designed and printed one at a time. For control, the team picked the Raspberry Pi 2 Model B computer and developed the software in Java and Python. The team had to write its own control software for the motors because none existed for the Raspberry Pi.

Vyo’s camera is designed to detect faces as well as phicons, allowing the robot to respond to people who approach it. The 1.8-in. LCD screen, exposed to the user through a bowing gesture designed to evoke grace, displays the status of devices under the robot’s control. A speaker provides spoken notifications. Dynamixel MX-64, MX-28 and XL-320 motors drive the robot’s five degrees of freedom. Five layers of paint and sanding give Vyo a finished appearance.
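The article does not describe the motor control software the team wrote. As a rough illustration of what the lowest layer of such software might involve, here is a minimal Python sketch that builds instruction packets in Robotis's published Dynamixel Protocol 1.0 format, assuming the servos are addressed that way. The function names, the servo ID and the use of the goal-position register (address 30 on MX-series servos) are illustrative assumptions, not details from the Vyo project.

```python
# Dynamixel Protocol 1.0 instruction codes (from the public Robotis documentation).
READ_DATA = 0x02
WRITE_DATA = 0x03

# Assumption: goal position lives at control-table address 30 (MX series),
# stored as a 2-byte little-endian value in the range 0..4095.
GOAL_POSITION_ADDR = 30


def checksum(body):
    """Protocol 1.0 checksum: low byte of the bitwise NOT of the sum of the body."""
    return (~sum(body)) & 0xFF


def build_packet(servo_id, instruction, params=()):
    """Build a packet: 0xFF 0xFF ID LENGTH INSTRUCTION PARAMS... CHECKSUM."""
    length = len(params) + 2  # instruction byte + checksum byte + parameters
    body = [servo_id, length, instruction, *params]
    return bytes([0xFF, 0xFF, *body, checksum(body)])


def goal_position_packet(servo_id, position):
    """Hypothetical helper: packet asking one servo to move to `position`."""
    if not 0 <= position <= 4095:
        raise ValueError("position must be in 0..4095")
    low, high = position & 0xFF, position >> 8
    return build_packet(servo_id, WRITE_DATA, [GOAL_POSITION_ADDR, low, high])


if __name__ == "__main__":
    # Ask the servo with (hypothetical) ID 1 to move to mid-range.
    print(goal_position_packet(1, 2048).hex(" "))
```

Writing the resulting bytes to the serial port that the servo bus is attached to would be a separate step, which this sketch leaves out.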


Hoffman and his team completed Vyo in November 2015 to an enthusiastic reception at SK Telecom headquarters in Seoul.

Hoffman—who moved to his current post at Cornell in April—is now at work on follow-up projects, including a companion robot for autistic children and a wearable robotic arm to give users an extra hand. It’s all in the service of creating a better future for both robots and people. “I often hear people say: ‘You try to make robots that are like humans.’ I disagree. I want to make robots that will engage humans in an emotional and respectful way. There’s a difference,” he says.


About the Author

Michael Belfiore’s book The Department of Mad Scientists is the first to go behind the scenes at DARPA, the government agency that gave us the Internet. He writes about disruptive innovation for a variety of publications. Reach him via michaelbelfiore.com.
