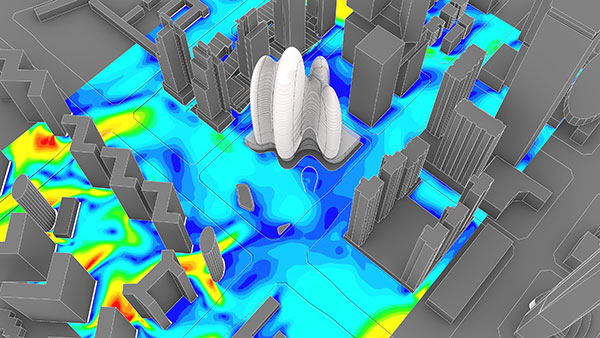

External CFD analysis of wind speed at the pedestrian level around a 200-m skyscraper run on a cloud-native engineering simulation platform. Design by Zaha Hadid Architects; image courtesy of SimScale.

Latest News

December 15, 2021

Physics-based simulation is often used in place of expensive prototyping and physical testing. In many cases, it can replace physical tests outright, letting engineers design and create products virtually with simulations that mimic reality. This helps drive design decisions and is an efficient way to nurture innovation.

Using Virtual to Create Reality

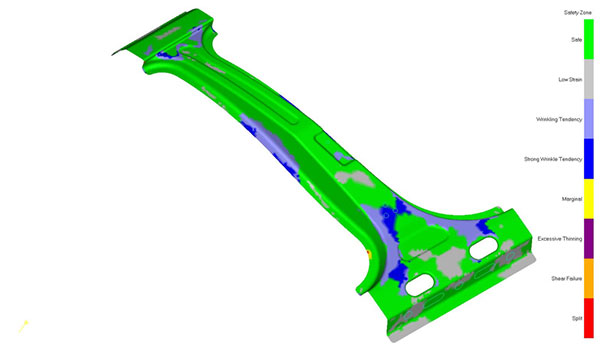

Dan Marinac is the executive director of product marketing for MSC Software, part of Hexagon’s Manufacturing Intelligence division. Marinac is upbeat about the benefits of virtual simulation, which has given rise to the age of the digital twin, now a widely used engineering tool.

“Physics-based simulation is the perfect substitute for prototyping products and objects,” says Marinac. “A digital twin is a virtual model of a process, product or service. The simulation of the physical world with a virtual model, the digital twin, allows analysis of data and monitoring of systems and processes to predict problems before they occur, assess risks, prevent downtime and develop new opportunities for greater efficiency and safety.”

Safety Zone analysis identifies manufacturing formability risks, such as splits (red) and wrinkles (blue), for designers. Image courtesy of Hexagon.

Digital twins consist of three main components: the physical object in the real world (measurement data from internal sensors), a high-fidelity virtual object in the digital world (world-class multiphysics and co-simulation) and the connection between the real and virtual objects via data and information, Marinac notes.
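To make Marinac’s three-part description concrete, here is a minimal Python sketch. It is illustrative only: the class names, the lumped-capacitance thermal model standing in for a high-fidelity multiphysics simulation, and the simple reset-to-measurement update are all assumptions, not MSC or Hexagon software. Sensor readings play the role of the physical object, `VirtualModel` is the virtual object, and `DigitalTwinLink` is the data connection that compares the two and flags discrepancies.

```python
from dataclasses import dataclass, field


@dataclass
class SensorReading:
    """Measurement from the physical object (hypothetical temperature sensor)."""
    time_s: float
    temperature_c: float


@dataclass
class VirtualModel:
    """Toy lumped-capacitance thermal model standing in for a high-fidelity simulation."""
    ambient_c: float
    time_constant_s: float
    state_c: float

    def step(self, dt_s: float) -> float:
        # Explicit Euler update of dT/dt = (T_ambient - T) / tau
        self.state_c += dt_s * (self.ambient_c - self.state_c) / self.time_constant_s
        return self.state_c


@dataclass
class DigitalTwinLink:
    """Connection between real and virtual: predict, compare, flag, resynchronize."""
    model: VirtualModel
    tolerance_c: float
    alerts: list = field(default_factory=list)
    last_time_s: float = 0.0

    def ingest(self, reading: SensorReading) -> float:
        # Advance the virtual model to the measurement time and predict
        predicted = self.model.step(reading.time_s - self.last_time_s)
        self.last_time_s = reading.time_s
        residual = reading.temperature_c - predicted
        # A large mismatch may indicate a developing problem in the real asset
        if abs(residual) > self.tolerance_c:
            self.alerts.append((reading.time_s, residual))
        # Simplest possible data assimilation: reset model state to the measurement
        self.model.state_c = reading.temperature_c
        return residual
```

A production twin would replace the reset step with a proper estimator (a Kalman filter, for example) and the toy model with a validated multiphysics solver; the three-way split of physical data, virtual model and connecting link is the part the sketch is meant to show.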

David Heiny is the CEO and co-founder of SimScale. He says multiphysics simulation is well positioned to drive a convergence of simulation and testing, because users can now run it through cloud-native simulation tools.

He also points to simulation’s ability to run the same design under different operating conditions and variations, exploring the full design space and assessing how design changes affect expected performance.
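Heiny’s point about sweeping a design across operating conditions can be illustrated with a toy parameter study. The sketch below is a stand-in for a cloud simulation run, built on stated assumptions: it uses the closed-form Euler-Bernoulli deflection of an end-loaded steel cantilever, δ = FL³/(3EI) with I = bh³/12, instead of a multiphysics solver, and the dimensions, loads and 5-mm deflection limit are invented for illustration. Each candidate section height is checked against every load case, and the worst case decides whether it passes.

```python
E_STEEL_PA = 200e9  # assumed Young's modulus for steel


def tip_deflection_m(load_n, length_m, width_m, height_m, youngs_pa=E_STEEL_PA):
    """Euler-Bernoulli cantilever with an end load: delta = F L^3 / (3 E I)."""
    second_moment = width_m * height_m**3 / 12.0  # rectangular section
    return load_n * length_m**3 / (3.0 * youngs_pa * second_moment)


def sweep(heights_m, loads_n, length_m=1.0, width_m=0.05, limit_m=0.005):
    """Evaluate every design variant under every operating load; keep the worst case."""
    results = {}
    for h in heights_m:
        worst = max(tip_deflection_m(f, length_m, width_m, h) for f in loads_n)
        results[h] = (worst, worst <= limit_m)
    return results


def lightest_feasible(results):
    """Smallest section height that meets the limit in every load case, or None."""
    ok = [h for h, (_, passes) in results.items() if passes]
    return min(ok) if ok else None


if __name__ == "__main__":
    study = sweep(heights_m=[0.02, 0.04, 0.05], loads_n=[500.0, 1000.0])
    for h, (delta, passes) in study.items():
        print(f"h = {h:.2f} m: worst deflection {delta * 1000:.2f} mm, pass = {passes}")
    print("lightest feasible height:", lightest_feasible(study))
```

With a real solver each `tip_deflection_m` call would become one simulation job, which is why running the grid in parallel on cloud hardware matters: the number of runs grows as the product of design variants and operating points.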

“This allows designers to focus on the parameters that really make a difference to the final design,” says Heiny. “For certain applications where the physics and materials are well defined, the design is driven by simulation insights, and the changes are incremental to an initial configuration operating in the field, simulation, if run in the cloud, can prove to be a valid substitute for physical prototyping. Of course, existing regulations and industry practices may still require the execution of physical tests, but in this case, engineers can be sure that they got their design right on the first try.”

Stephen Hooper is the vice president and general manager of Autodesk Fusion 360. He agrees that physical prototyping has real limitations, but cautions that design processes that rely on simulation have limitations too.

“Physical prototyping has enormous limitations and is rarely successful in providing timely feedback that can be used to influence dramatic change in a design,” says Hooper. “That said, users shouldn’t leverage simulation as a substitute for current design processes that work perfectly—it’s a great complement but not a silver bullet. By incorporating simulation as a complement to existing processes and toolsets, companies can engineer better products, which embody additional value and capabilities.”

Accuracy and Precision

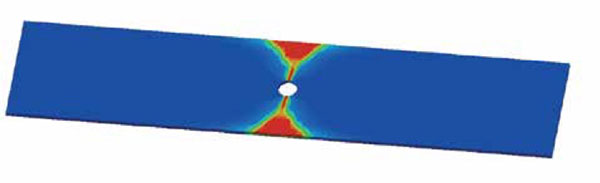

Greg Brown, a product management fellow at Onshape, has worked for PTC for some time. He believes that artificial intelligence (AI) and machine learning (ML) are key ingredients for accurate multiphysics simulation.

“AI, ML and simulation go hand in hand, and all are decidedly not new technologies,” explains Brown. “However, their promise has generally exceeded their practical value up to this point.”

Digimat can predict the outcome of an open-hole tensile test. Red zones indicate full fiber damage. Image courtesy of Hexagon.

As Brown sees it, even as the precision and scope of physics simulation have been refined and expanded over time, mainstream engineers in the consumer products, heavy industry and automotive sectors have been underserved.

“The holy grail for software vendors has always been to tap into the very large ‘designer simulation’ market, and for one reason or another this has eluded most attempts,” Brown adds. “On the other hand, a confluence of technologies that have emerged in the last couple of years, including but not limited to graphics processing unit-based solvers, cloud and [software-as-a-service] implementations, and accessible AI and ML, could change the game for designer simulation.”

Dr. Larry Williams, distinguished engineer with Ansys, emphasizes accuracy in simulation: if a simulation is not accurate, there is no point in using it in the first place.

“Many simulators today, especially those based on finite elements, are what we call deterministically accurate,” says Williams. “They solve the underlying physics and converge to the unique solution. So now we can trust accurate physics modeling but then there’s the issue of material properties and accurate materials modeling. The numerics will provide accuracy if you have as input the proper material properties like dielectric permittivity, specific heat, mass density, Young’s modulus and viscosity.”
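Williams’s point, that the numerics converge to a unique solution while the answer still hinges on material inputs, can be demonstrated on a toy problem. The sketch below is an assumption-laden illustration, not Ansys code: it uses a second-order finite-difference scheme (rather than finite elements) for steady 1D heat conduction, −k u″ = q₀ sin(πx) on [0, 1] with u(0) = u(1) = 0, whose exact solution is u = q₀ sin(πx)/(kπ²). Halving the grid spacing cuts the error by roughly 4x, but solving with the wrong thermal conductivity k leaves an error that no amount of refinement removes.

```python
import math


def solve_tridiagonal(lower, diag, upper, rhs):
    """Thomas algorithm for a tridiagonal system (no pivoting; fine for this SPD matrix)."""
    n = len(diag)
    c = [0.0] * n
    d = [0.0] * n
    c[0] = upper[0] / diag[0]
    d[0] = rhs[0] / diag[0]
    for i in range(1, n):  # forward elimination
        denom = diag[i] - lower[i] * c[i - 1]
        c[i] = upper[i] / denom if i < n - 1 else 0.0
        d[i] = (rhs[i] - lower[i] * d[i - 1]) / denom
    x = [0.0] * n
    x[-1] = d[-1]
    for i in range(n - 2, -1, -1):  # back substitution
        x[i] = d[i] - c[i] * x[i + 1]
    return x


def max_error(n_interior, k_model, k_true=1.0, q0=1.0):
    """Max nodal error of the discrete solution computed with k_model,
    measured against the exact solution for the true conductivity k_true."""
    h = 1.0 / (n_interior + 1)
    xs = [(i + 1) * h for i in range(n_interior)]
    off = -k_model / h**2  # central-difference stencil for -k u''
    lower = [off] * n_interior
    diag = [2.0 * k_model / h**2] * n_interior
    upper = [off] * n_interior
    rhs = [q0 * math.sin(math.pi * x) for x in xs]
    u = solve_tridiagonal(lower, diag, upper, rhs)
    exact = [q0 * math.sin(math.pi * x) / (k_true * math.pi**2) for x in xs]
    return max(abs(a - b) for a, b in zip(u, exact))


if __name__ == "__main__":
    for n in (9, 19, 39):
        print(n, max_error(n, k_model=1.0), max_error(n, k_model=1.2))
```

The first column of errors shrinks steadily with refinement; the second, computed with a conductivity 20% too high, settles near a fixed offset. That is the distinction Williams draws between converged numerics and accurate material modeling.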

Practical Use Cases

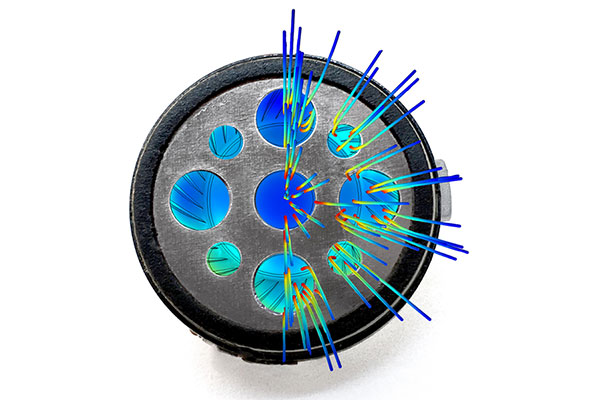

Multiphysics simulation has many practical uses, from improving safety to fostering innovative new designs.

“Nuclear safety is one highly important area in which simulation has been frequently used,” says Phil Kinnane, senior vice president of sales for COMSOL. “A group at Oak Ridge National Laboratory (ORNL) is required to use validation according to regulations and procedure. This is for their own confidence in the software, COMSOL Multiphysics, but also because the formal nuclear-safety-related calculation process, as controlled by the DOE, requires such. Yet, the testing is difficult in the first instance and formalized and rigid in the second, such that the validation criteria may not adequately describe real-world behavior.

“Therefore, ORNL is also forced to perform verification of their models, where confidence is built by being able to trust that the software and hardware to perform such simulations will always provide the same answer,” Kinnane says. “With enough confidence in the underlying physics used to describe the physical system, they can have the same confidence that simulating the physical system will always be accurate.”

Brett Chouinard, chief technology officer at Altair, highlights the use of multiphysics simulation in vehicle crash testing. Automakers once had to crash several hundred cars in a single vehicle program.

Today, he says, they can crash very few vehicles, if any, because much of the work is simulated, including airbag deployment and other aspects of the crash such as passive and, sometimes, active seatbelt systems. Deciding what to simulate, however, still takes careful planning.

Gert Sablon, senior director of Simcenter Testing Solutions at Siemens Digital Industries Software, cautions that some simulation methodologies may lack the realism needed to deliver predictive models.

“Simulation models are essential for complex product development or for use in embedded software to enable smart behavior,” says Sablon. “Test departments play a crucial role in filling the current gaps. This goes way beyond measuring accurate data for standard structural correlation and model updating.

“Testing allows companies to explore uncharted design territories and build knowledge about new materials and all the additional parameters that come with mechatronic components,” he adds. “This requires specialized, high-precision tools. And it adds an enormous amount of validation work for test departments on top of their standard tasks, which already are performed under growing time pressure. Those testing areas include prototype validation and certification.”

Impact on Product Behavior

Sablon says that time is money, especially late in development. Prototypes and testing infrastructure can be costly, and any delay at this stage directly affects the product’s market entry.

“Companies dictate extremely tight schedules and fear discovering defects that lead to late repairs and recurrent prototyping,” says Sablon. “This step is indispensable for any product to go into operation. And with increasing product complexity, including after-delivery updates, the share of work in this area can be expected to grow, including many more product variations, parameters and operating points. Therefore, test departments need solutions that can effectively handle projects of any scale and provide immediate and profound insight into product behavior.”


About the Author

Jim Romeo is a freelance writer based in Chesapeake, VA. Send e-mail about this article to DE-Editors@digitaleng.news.
